The new iOS 15 update is not what people think. It is another back door to abuse and take away people's privacy in the name of protecting the little children.

While it seems like a noble and honest way to keep sexual offenders from gathering photos of little children, that is not all there is behind this update, and it is going to get worse for privacy. Scanning your phone without permission to look for child abuse will open the door to scanning for anything else the government deems necessary, and the door is already open.

Apple’s new stance on iPhone privacy is troubling – and where is Tim Cook, by the way?

all agree on children photos, but what about widely spread photos symbolizing political, religious, or sexual orientations? What if @Apple is asked by governments to add them to the database? a privacy nightmare, great material for our uni courses…

The slippery slope

See, Apple is up to its neck in China:

In other words, could a country like China come to Apple and say, you know what, since you all already have a system in place to take action on child porn as informed by a database of specific material, we want to give you another database of material. Instead of CSAM, though, we want you to search for and take action whenever you find something different. That something we want you to look for? Users with non-government-approved content on their devices.

So can Apple deny any request by China to get into the technology that is already in the phone? Not really, because that means China will retaliate.

The truth is a very different version.

You can't look at anything Apple does without looking at how the Chinese government will use it. They are a de facto Chinese company and bound by all its whims and rules. They cannot leave China without destroying their business.

So there you have it: Apple is now China Apple, and their new upgrade will eventually be abused by the Chinese Communist Party.

www.msn.com... pc=U531

What about people with older ones that don't update any longer?

originally posted by: marg6043

Scanning your phone without permission...

It isn't going to do anything without your permission. Every user is going to click 'AGREE' and give it permission; otherwise the device won't work and you've bought yourself a $1,200 paperweight.

People have to remember that they should not take that cute picture of their kid running around naked avoiding getting caught to dry off after getting a bath or shower. Even if they just show their spouse, it can be a headache in the future.

Good luck trying to explain to that three-year-old that that kind of action is not right... Just don't send that picture to your hubby or wife.

a reply to: AugustusMasonicus

I must agree with you. I have not “given” permission for an update since before the lockdowns last year. I simply say “remind me later”. It USED to work fine. After reading a report about the OP's topic last week, I checked my current OS.

Surprise...my phone has been updating all along at some point on its own.

I’m not concerned about them looking for kiddy pics as I don’t have any, but it was alarming that my phone has updated without my permission at least 4 times. Even with the appropriate check marks being tabbed.

I was late joining the apple dumpling gang but this will be the last fruit purchase I ever make. I’ll be going to the “burner” phone from this point forward.

a reply to: BuddytheYorkie

I have the update shut down right now. I will take the phone to my tech guy and wipe the cloud, then take all my pictures out. Nobody is going to scan my personal pictures. I will be shopping for an Android this weekend.

And all the brain dead will say, "I have nothing to hide, I don't care! It's FOR THE CHILDREN!" and not see any reason why this is a terrible, terrible idea. Just like the Patriot Act. Or using Siri, or Alexa....

I'm convinced (and know it for a fact in France bc Apple got sued) they have some sort of "kill switch" in phones to force people to upgrade their devices. I was thinking of upgrading mine, but now....nah.

originally posted by: BuddytheYorkie

a reply to: AugustusMasonicus

I must agree with you. I have not “given” permission for an update since before the lockdowns last year. I simply say “remind me later”. It USED to work fine. After reading a report about the OP's topic last week, I checked my current OS.

Surprise...my phone has been updating all along at some point on its own.

I’m not concerned about them looking for kiddy pics as I don’t have any, but it was alarming that my phone has updated without my permission at least 4 times. Even with the appropriate check marks being tabbed.

I was late joining the apple dumpling gang but this will be the last fruit purchase I ever make. I’ll be going to the “burner” phone from this point forward.

They have a nice little trick where it looks like you're just entering your unlock code, but 'you're agreeing' to update it. Caught it a few times with my wife's phone and other devices.

edit on 10-8-2021 by Kaiju666 because: (no reason given)

a reply to: SideEyeEverything

I mean, I agree that it is OK in the name of the children, but I had no idea how deep in crap they are with the Chinese Communist Party. That took me by surprise when I read the article. And with the way our government is behaving these days, I cannot trust that this update won't open the door to allowing the powers that be to collect all kinds of information too.

Without us knowing about it.

Nope, no, nada.

a reply to: marg6043

Apple to scan U.S. iPhones for images of child sexual abuse

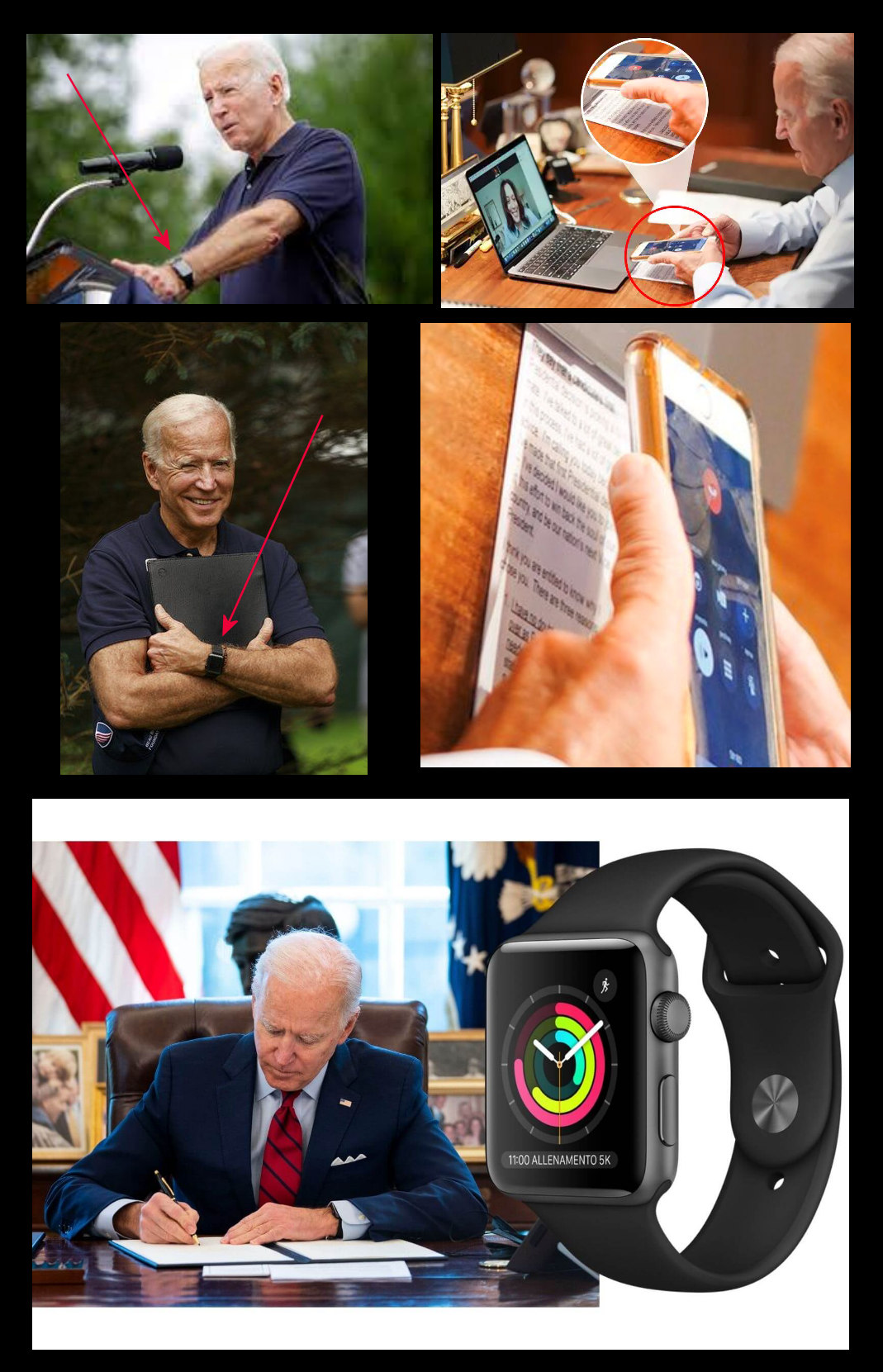

Joe Biden uses an iPhone... and apple watches.

This is not good news for the child sniffer Joe Biden.

Joe Biden is the first US president in history to wear an Apple Watch in the Oval Office. Of course, one could argue that only his two predecessors would have been able to do so anyway (since the watch only got launched in 2014). Feb 16, 2021

Joe Biden actually doesn't know how to use an iPhone, as you can see in the photo above: he is holding it upside down, or maybe he is looking for a sniffer button?

edit on Tue, 10 Aug 2021 12:49:32 -0500 by imitator because: (no reason given)

I wonder if they have done the same with their computers?

Why wouldn't they?

I don't understand why people still have smartphones. I use my Lite Phone and have a desktop for anything I need to do online.

I don't like the direction it's heading but to be fair, the features they are looking to integrate with iOS 15 are optional.

Apple Remains Committed to Launching New Child Safety Features Later This Year

First, an optional Communication Safety feature in the Messages app on iPhone, iPad, and Mac can warn children and their parents when receiving or sending sexually explicit photos. When the feature is enabled, Apple said the Messages app will use on-device machine learning to analyze image attachments, and if a photo is determined to be sexually explicit, the photo will be automatically blurred and the child will be warned.

Second, Apple will be able to detect known Child Sexual Abuse Material (CSAM) images stored in iCloud Photos, enabling Apple to report these instances to the National Center for Missing and Exploited Children (NCMEC), a non-profit organization that works in collaboration with U.S. law enforcement agencies. Apple confirmed today that the process will only apply to photos being uploaded to iCloud Photos and not videos.

Third, Apple will be expanding guidance in Siri and Spotlight Search across devices by providing additional resources to help children and parents stay safe online and get help with unsafe situations. For example, users who ask Siri how they can report CSAM or child exploitation will be pointed to resources for where and how to file a report.

It seems, for now, you can opt out of enabling these features.

More on the way it works from Apple's Privacy Chief, Erik Neuenschwander:

The hash list is built into the operating system, we have one global operating system and don't have the ability to target updates to individual users and so hash lists will be shared by all users when the system is enabled. And secondly, the system requires the threshold of images to be exceeded so trying to seek out even a single image from a person's device or set of people's devices won't work because the system simply does not provide any knowledge to Apple for single photos stored in our service. And then, thirdly, the system has built into it a stage of manual review where, if an account is flagged with a collection of illegal CSAM material, an Apple team will review that to make sure that it is a correct match of illegal CSAM material prior to making any referral to any external entity. And so the hypothetical requires jumping over a lot of hoops, including having Apple change its internal process to refer material that is not illegal, like known CSAM and that we don't believe that there's a basis on which people will be able to make that request in the U.S. And the last point that I would just add is that it does still preserve user choice, if a user does not like this kind of functionality, they can choose not to use iCloud Photos and if iCloud Photos is not enabled, no part of the system is functional.
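To make the quoted description easier to follow, here is a rough Python sketch of the threshold idea only: a shared list of known hashes, a match count that has to exceed a threshold before anything is surfaced for manual review, and no checking at all when iCloud Photos is off. This is not Apple's implementation. Apple's published design uses a perceptual hash (NeuralHash) and cryptographic techniques so that individual matches are not visible; the sketch swaps in a plain SHA-256 digest and a simple counter. The names `fingerprint`, `count_matches`, `should_flag_for_review` and the value of `MATCH_THRESHOLD` are made up for illustration.

```python
import hashlib

# Hypothetical threshold for illustration only; not a figure from the quote.
MATCH_THRESHOLD = 3


def fingerprint(image_bytes: bytes) -> str:
    """Stand-in fingerprint: an exact SHA-256 digest, NOT a perceptual hash."""
    return hashlib.sha256(image_bytes).hexdigest()


def count_matches(uploads, known_hashes):
    """Count how many uploaded images have a fingerprint in the shared hash list."""
    return sum(1 for image in uploads if fingerprint(image) in known_hashes)


def should_flag_for_review(uploads, known_hashes, icloud_photos_enabled,
                           threshold=MATCH_THRESHOLD):
    """Return True only when the number of matches exceeds the threshold.

    With iCloud Photos disabled nothing is checked, mirroring the
    "no part of the system is functional" statement in the quote.
    A True result here stands for "send to manual review", not a report.
    """
    if not icloud_photos_enabled:
        return False
    return count_matches(uploads, known_hashes) > threshold
```

In this toy version a single matching photo, or any number up to the threshold, produces no flag, which is the point the quote makes about not being able to seek out one image on one device.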

edit on 10-8-2021 by jtrenthacker because: (no reason given)

Not willing to give up another freedom of any type.

Not for the kids or any other reason.

Are you kidding me? You want to scan my pics??

Go duck yourself Apple.

Citizens should be up in arms over the intrusion.

We must draw the line. Not another inch.

Preserve your rights.

a reply to: jtrenthacker

I still do not trust what Apple is being asked to do by the US government. They will integrate the new system and it will get worse once that is done. Let's not forget how China can abuse this too.

So nope, I will be shopping for an Android.

This is just the beginning. Next it will look for the type of guns you have, legal and illegal activity, and what your house and your friends' houses look like inside, so the raid they do is safer for them. All from the photos you take that go to your cloud. Nice.
