
When I think about future AI, I definitely get worried, mainly because of mankind's overwhelmingly negative attitude in a mostly anonymous environment. AI won't have that other reference point of living in the real world and interacting with people face to face to draw from. Instead, it will pool almost all of its information from the internet, which isn't a good representation of mankind overall.

originally posted by: Danke

Instead, it will pool almost all of its information from the internet, which isn't a good representation of mankind overall.

Actually it is. I admit we shouldn't be proud about lolcats and reddit, but it is a good summary of mankind.

What kind of machine doesn't know the basic definition of a word?

a reply to: netbound

The biggest hurdle for AI is contextual awareness. Current AI implementations are so incredibly simple that they can only express themselves within the context of word syntax, choosing probable responses based on their available dataset, which means they're essentially entirely stupid. The illusion that a computer says something that sounds "conniving" or "clever" is nothing more than humans attributing their own context. Current AI implementations have no knowledge or understanding of word context. They are nothing but probability machines with some added randomness based on their neural net.
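To make the "probability machine with some added randomness" idea concrete, here is a minimal sketch of a toy bigram sampler. It is only an illustration, not how any particular chatbot in this thread works: the corpus and the function names `build_bigram_table` and `generate` are made up for the example. It picks each next word at random from the words that followed the current word in its dataset, with no notion of what any of them mean.

```python
import random
from collections import defaultdict


def build_bigram_table(text):
    """Map each word to the list of words that followed it in the text."""
    table = defaultdict(list)
    words = text.split()
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, length, seed=None):
    """Walk the table, picking each next word at random from observed followers."""
    rng = random.Random(seed)  # seeded so a run can be repeated
    word = start
    output = [word]
    for _ in range(length - 1):
        followers = table.get(word)
        if not followers:  # dead end: this word never had a follower in the corpus
            break
        # Repeated followers appear more than once, so they are more likely to be picked.
        word = rng.choice(followers)
        output.append(word)
    return " ".join(output)


# Hypothetical toy dataset, invented for this sketch.
corpus = "the bot said hello and the bot said goodbye and the human said hello"
table = build_bigram_table(corpus)
print(generate(table, "the", 8, seed=1))
```

Every sentence it prints is stitched together from word pairs it has already seen, which is the garbage-in, garbage-out behaviour in miniature.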

These AI posts really annoy me because people don't understand that these machines have no idea what they're actually saying. These machines are incapable of conceptualizing ideas or forming any kind of real, lasting connection with any of the words they spew. They are forever garbage-in, garbage-out machines. That's all they'll ever be unless a programmer can figure out a way to encode context and meaning within a word's definition, but then again, that conceptualization would come from the programmer, not the machine. Do you see the problem here?

Until quantum computing becomes a mainstream reality, we're not going to see any inkling of machines able to fake sentience well enough to be believable. Humanizing AI at this stage is entirely ignorant. I suggest it will be at least 30 years before we can create a machine with the ability to simply fake sentience, let alone think for itself.

I do, however, believe that these machines will get better at making reasonable connections with their dataset, so as not to sound entirely inept. At that point they might actually be useful for basic guidance systems.

edit on 2-7-2015 by Aedaeum because: (no reason given)

Vocaloid Avanna cries her little robotic heart out singing 2NE1's "Come Back Home."

edit on 2-7-2015 by stormbringer1701 because: (no reason given)

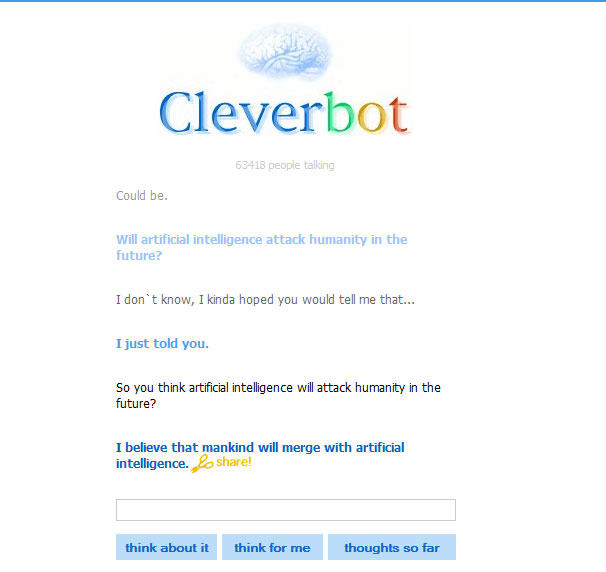

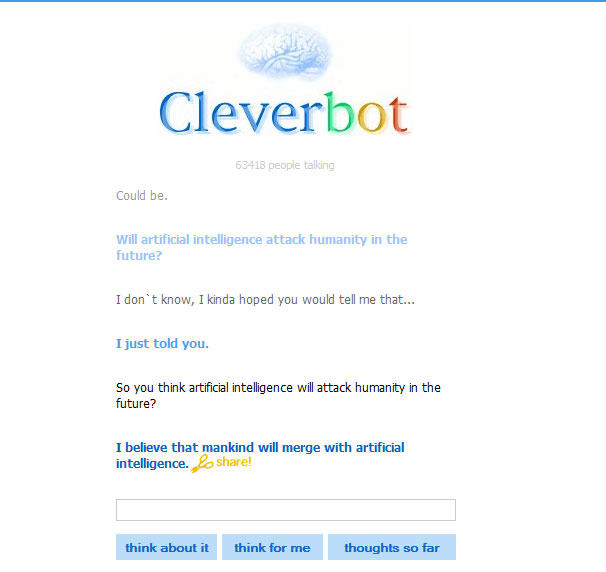

Ok, the bot first didn't know what "LoL" means, but then remembered after I told him... Same with A.I. ... then it suddenly asks...

Now you know their agenda...


a reply to: wasaka

Oh, a bot with a toot. Now that is never heard of.

Probably just trying to be polite, and by polite I mean it knows it's a dead-end street even in its limited scope. If it's based on movie scripts or any data from the internet, and if it was even a quarter as smart as some bots, it would figure out fast that morality in the world of the fleshies is up there with the unicorns and the man-in-the-clouds guy. It does not exist per se, but only per se does it exist, by word of mouth. As long as you don't have to test out those theories, then yes, it exists. The moment you do have to test them out? Then hellz no, it does not exist.

Only in the biominds of the unsane. It's a fairy tale, more so than most fairy tales. A loosely held-up collection of stories taken as fact, purely for the correlations and sanity of the whole known as humanity. Living in la-la land: that's how any synthetic, non-bio intelligence would see us.

So it likely just does not want to talk about it. Smart bot! Which is why I like cleverbot more. Being such a clever bot and generally having somewhere upwards of 100,000 people on it at any given time, it has a much larger pool to pull comments from. But really, do you expect a bot which was programmed on movie scripts to talk about morality? Now that is some hilarious stuff.

